How XR Works


🔎 Intro — What "How XR Works" really means

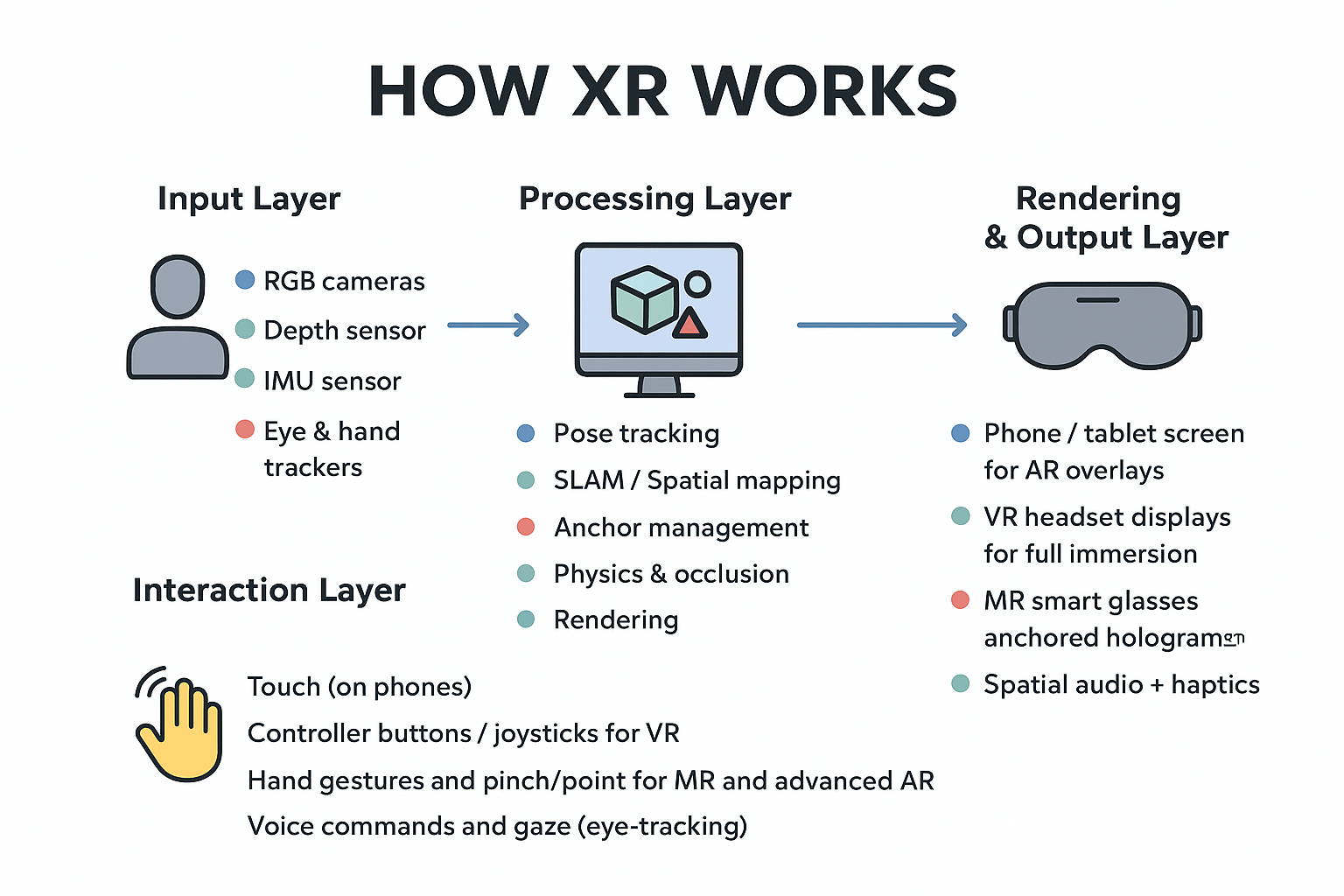

Extended Reality (XR) is the umbrella term that covers Augmented Reality (AR), Virtual Reality (VR), and Mixed Reality (MR). This guide breaks down how XR systems sense the world, compute a digital representation, render visuals, and let people interact, all in simple, hands-on language.

🧠 Input Layer — Sensors & environment capture

XR starts by reading the physical world. Devices use multiple sensors to build a live model of the environment:

- RGB cameras (visual feed)

- Depth sensors / LiDAR (distance and shape)

- IMU: accelerometer + gyroscope (motion & orientation)

- Microphones (sound for spatial audio / voice control)

- Eye & hand trackers (interaction intent)
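To make the IMU bullet concrete, here is a minimal sketch of a complementary filter, one common way to fuse gyroscope and accelerometer readings into a single pitch estimate. The function name `fusePitch`, the `alpha` weight of 0.98, the axis convention, and the sample readings are illustrative assumptions, not values from any particular device SDK.

```javascript
// Complementary filter: blend the integrated gyroscope rate (smooth, but drifts)
// with the accelerometer's gravity-based angle (noisy, but drift-free).
// All names, constants, and the axis convention are illustrative assumptions.
function fusePitch(prevPitchDeg, gyroRateDegPerSec, accel, dtSec, alpha = 0.98) {
  // Pitch implied by the direction of gravity in the accelerometer reading.
  const accelPitchDeg =
    Math.atan2(-accel.x, Math.hypot(accel.y, accel.z)) * (180 / Math.PI);
  // Pitch predicted by integrating the gyroscope over one time step.
  const gyroPitchDeg = prevPitchDeg + gyroRateDegPerSec * dtSec;
  // Trust the gyroscope short-term and the accelerometer long-term.
  return alpha * gyroPitchDeg + (1 - alpha) * accelPitchDeg;
}

// Device lying flat and not rotating: a wrong starting guess decays toward 0.
let pitch = 10;
for (let i = 0; i < 500; i++) {
  pitch = fusePitch(pitch, 0, { x: 0, y: 0, z: 9.81 }, 0.01);
}
console.log(pitch.toFixed(3));
```

Production trackers typically use more elaborate filters and fuse camera data as well; this sketch only shows why two imperfect sensors together beat either one alone.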

⚙️ Processing Layer — The XR engine

Sensor data flows into an engine or runtime: a game engine such as Unity or Unreal, the WebXR browser API, or a platform SDK such as ARKit or ARCore. It handles:

- Pose tracking — where the user and device are in 3D space

- SLAM / spatial mapping — building a 3D mesh of surroundings

- Anchor management — keeping virtual objects fixed to real-world spots

- Physics & occlusion — making virtual objects behave believably

- Rendering — turning the scene into frames the display shows
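Pose tracking and anchor management meet in one small calculation: an anchor is stored once in world coordinates, then re-expressed relative to the latest device pose every frame. The sketch below assumes a simplified pose (a position plus yaw only) and a right-handed frame with +Y up and -Z forward; the function and field names are hypothetical, and real engines use full 4x4 matrices or quaternions.

```javascript
// Express a world-space anchor in the device's local frame.
// Assumption: the pose is a position plus a yaw angle about +Y only.
function worldToDevice(anchor, pose) {
  // Step 1: translate so the device sits at the origin.
  const dx = anchor.x - pose.position.x;
  const dy = anchor.y - pose.position.y;
  const dz = anchor.z - pose.position.z;
  // Step 2: undo the device's yaw by rotating the offset by -yaw about +Y.
  const c = Math.cos(-pose.yawRad);
  const s = Math.sin(-pose.yawRad);
  return { x: c * dx + s * dz, y: dy, z: -s * dx + c * dz };
}

// The anchor never moves in world space...
const anchor = { x: 0, y: 1, z: -3 };
// ...but its local coordinates change as the user walks 1 m forward.
const before = worldToDevice(anchor, { position: { x: 0, y: 0, z: 0 }, yawRad: 0 });
const after = worldToDevice(anchor, { position: { x: 0, y: 0, z: -1 }, yawRad: 0 });
console.log(before.z, after.z); // the anchor goes from 3 m ahead to 2 m ahead
```

Because only the pose changes between frames, the virtual object appears pinned to its real-world spot even though its on-screen position is recomputed constantly.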

🖥️ Rendering & Output Layer — Displays and feedback

Depending on the device, output may be:

- Phone/tablet screen for AR overlays

- VR headset displays for full immersion

- MR smart glasses for anchored holograms

- Spatial audio + haptics for richer presence
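As a rough illustration of the spatial audio bullet, the sketch below turns a sound source's position (already expressed in listener-local coordinates, +X right and -Z forward) into left and right channel gains. It assumes a simple inverse-distance falloff and equal-power panning; `spatialGains` and `refDistance` are hypothetical names, and real audio engines add head-related filtering on top of this.

```javascript
// Compute stereo gains for a source given in listener-local coordinates.
// Assumptions: inverse-distance attenuation and equal-power panning.
function spatialGains(source, refDistance = 1) {
  const distance = Math.hypot(source.x, source.y, source.z);
  // No boost inside the reference distance; 1/d falloff beyond it.
  const attenuation = refDistance / Math.max(distance, refDistance);
  // pan runs from -1 (hard left) to +1 (hard right).
  const pan = distance === 0 ? 0 : source.x / distance;
  // Map pan onto a quarter circle so left^2 + right^2 stays constant.
  const angle = ((pan + 1) * Math.PI) / 4;
  return {
    left: attenuation * Math.cos(angle),
    right: attenuation * Math.sin(angle),
  };
}

const ahead = spatialGains({ x: 0, y: 0, z: -2 }); // centered, half volume
const toTheRight = spatialGains({ x: 1, y: 0, z: 0 }); // almost all in the right ear
console.log(ahead, toTheRight);
```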

🤠 Interaction Layer — How users manipulate XR

XR supports many input modes. Common ones:

- Touch (on phones)

- Controller buttons / joysticks (VR)

- Hand gestures and pinch/point (MR and advanced AR)

- Voice commands and gaze (eye-tracking)
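Hand gestures usually come down to geometry on tracked joint positions. Here is a minimal sketch of pinch detection, assuming the hand tracker reports thumb-tip and index-tip positions in metres as plain `{x, y, z}` objects. The thresholds (1.5 cm to start, 3 cm to release) and all names are illustrative assumptions to be tuned per device.

```javascript
// Detect a pinch from two fingertip positions (metres).
// Two thresholds give hysteresis, so tracking jitter near the boundary
// does not make the pinch flicker on and off.
function isPinching(thumbTip, indexTip, wasPinching, startDist = 0.015, endDist = 0.03) {
  const gap = Math.hypot(
    thumbTip.x - indexTip.x,
    thumbTip.y - indexTip.y,
    thumbTip.z - indexTip.z
  );
  // An active pinch only ends once the fingers are clearly apart.
  return wasPinching ? gap < endDist : gap < startDist;
}

const thumb = { x: 0, y: 0, z: 0 };
const index = { x: 0.02, y: 0, z: 0 }; // fingertips 2 cm apart
console.log(isPinching(thumb, index, false)); // false: not close enough to start
console.log(isPinching(thumb, index, true)); // true: close enough to keep holding
```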

🔠 The real-time loop — simplified pseudocode

Here’s a minimal conceptual loop showing how an XR app keeps the experience synced with the real world:

    // Pseudocode: main XR loop
    initializeSensors()
    initializeXRSession()

    while (xrSessionRunning) {
        sensorData = readSensors()                 // cameras, IMU, depth
        pose = computePose(sensorData)             // head & device position
        worldMesh = updateSpatialMap(sensorData)
        updateAnchors(worldMesh)
        userInput = readInput()                    // hands, controllers, voice
        simulatePhysics(userInput, worldMesh)
        frame = renderFrame(pose, worldMesh)       // mix virtual with real (or replace)
        display(frame)
    }

    shutdownXRSession()

🧪 WebXR example — request AR session (concept)

This is a short, conceptual snippet demonstrating how a web app might start an AR session. Real implementations require HTTPS (a secure context), feature detection, and a user gesture such as a button click to start the session.

    // Call this from a user gesture (e.g. a button's click handler);
    // browsers reject requestSession() without user activation.
    async function startAR() {
      if (!navigator.xr) {
        console.log('WebXR not available');
        return null;
      }

      const supported = await navigator.xr.isSessionSupported('immersive-ar');
      if (!supported) {
        console.log('AR not supported on this device');
        return null;
      }

      const session = await navigator.xr.requestSession('immersive-ar', {
        requiredFeatures: ['local', 'hit-test']
      });
      // attach WebGL layer, set up frame loop and hit-tests to place anchors
      return session;
    }
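The comment in the snippet above mentions hit-tests. As a hedged sketch of that step, the helper below reads the first hit-test result from a frame and returns its position. It relies on `XRFrame.getHitTestResults()` and `XRHitTestResult.getPose()` from the WebXR Hit Test module; the helper name `firstHitPosition` is hypothetical, and the browser-only wiring is shown as comments so the function itself can be exercised outside a headset.

```javascript
// Return the position of the first surface hit this frame, or null.
// frame: XRFrame, hitTestSource: XRHitTestSource, refSpace: XRReferenceSpace.
function firstHitPosition(frame, hitTestSource, refSpace) {
  const results = frame.getHitTestResults(hitTestSource);
  if (results.length === 0) return null; // no real-world surface under the ray
  const pose = results[0].getPose(refSpace);
  if (!pose) return null; // pose can be unavailable if tracking is lost
  const { x, y, z } = pose.transform.position;
  return { x, y, z };
}

// Browser-only wiring (sketch), given a session created with 'hit-test':
// const refSpace = await session.requestReferenceSpace('local');
// const viewerSpace = await session.requestReferenceSpace('viewer');
// const hitTestSource = await session.requestHitTestSource({ space: viewerSpace });
// session.requestAnimationFrame(function onFrame(time, frame) {
//   const hit = firstHitPosition(frame, hitTestSource, refSpace);
//   if (hit) { /* move a placement reticle to hit */ }
//   session.requestAnimationFrame(onFrame);
// });
```

Casting the ray from the viewer space means the hit follows wherever the device is pointing, which is the usual basis for a "tap to place" reticle.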

🕶️ A-Frame quick VR scene — copy & open

A-Frame gives a low-effort way to create quick VR scenes. Save this as index.html and open in a modern browser.

    <!doctype html>
    <html>
      <head>
        <meta charset="utf-8">
        <script src="https://aframe.io/releases/1.4.0/aframe.min.js"></script>
        <title>Simple A-Frame Scene</title>
      </head>
      <body>
        <a-scene>
          <a-box position="-1 0.5 -3" rotation="0 45 0" color="#4CC3D9"></a-box>
          <a-sphere position="0 1.25 -5" radius="1.25" color="#EF2D5E"></a-sphere>
          <a-plane position="0 0 -4" rotation="-90 0 0" width="6" height="6"></a-plane>
          <a-sky color="#ECECEC"></a-sky>
        </a-scene>
      </body>
    </html>

🔧 MR in practice — a brief case study

Example: a field technician uses MR glasses to repair a pump. The glasses:

- Scan the pump and detect parts (camera + model matching)

- Place persistent anchors on bolts and valves

- Show step-by-step overlays and virtual tools

- Log actions to a remote server for audit and training

🔮 Performance & UX concerns

- Latency: keep motion-to-photon latency (the delay between a head movement and the updated image reaching the display) under roughly 20 ms for comfort

- Occlusion: virtual objects must appear behind or in front of real objects correctly

- Consistency: anchors should persist between sessions when needed

- Battery & thermal: mobile XR is power-sensitive
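The latency target is easier to reason about as a budget. The sketch below adds up per-stage timings and compares the total against the roughly 20 ms motion-to-photon target from the list above, and also shows the per-frame time available at a given refresh rate. The stage names and millisecond values are made-up placeholders, not measurements from any device.

```javascript
// Sum per-stage latencies (milliseconds) into a motion-to-photon estimate.
function motionToPhotonMs(stages) {
  return Object.values(stages).reduce((sum, ms) => sum + ms, 0);
}

// Time available to produce one frame at a given display refresh rate.
function frameBudgetMs(refreshHz) {
  return 1000 / refreshHz;
}

// Placeholder timings for illustration only.
const stages = { sensorRead: 2, tracking: 3, simulation: 2, render: 8, displayScanout: 4 };
const total = motionToPhotonMs(stages); // 19

console.log(total <= 20 ? 'within budget' : 'over budget');
console.log(frameBudgetMs(90).toFixed(1)); // "11.1" ms per frame at 90 Hz
```

Seen this way, every stage competes for the same few milliseconds, which is why mobile XR apps trade visual detail for speed.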

✍️ Wrap-up — quick checklist

- Sensors → Engine → Render → Display is the core pipeline

- AR overlays digital content on the real world, VR replaces it, and MR anchors interactive virtual objects within it

- XR is the family name; pick tech based on immersion needs, latency budget, and device constraints
