XR System Components

🔧 Intro — What "XR System Components" actually means

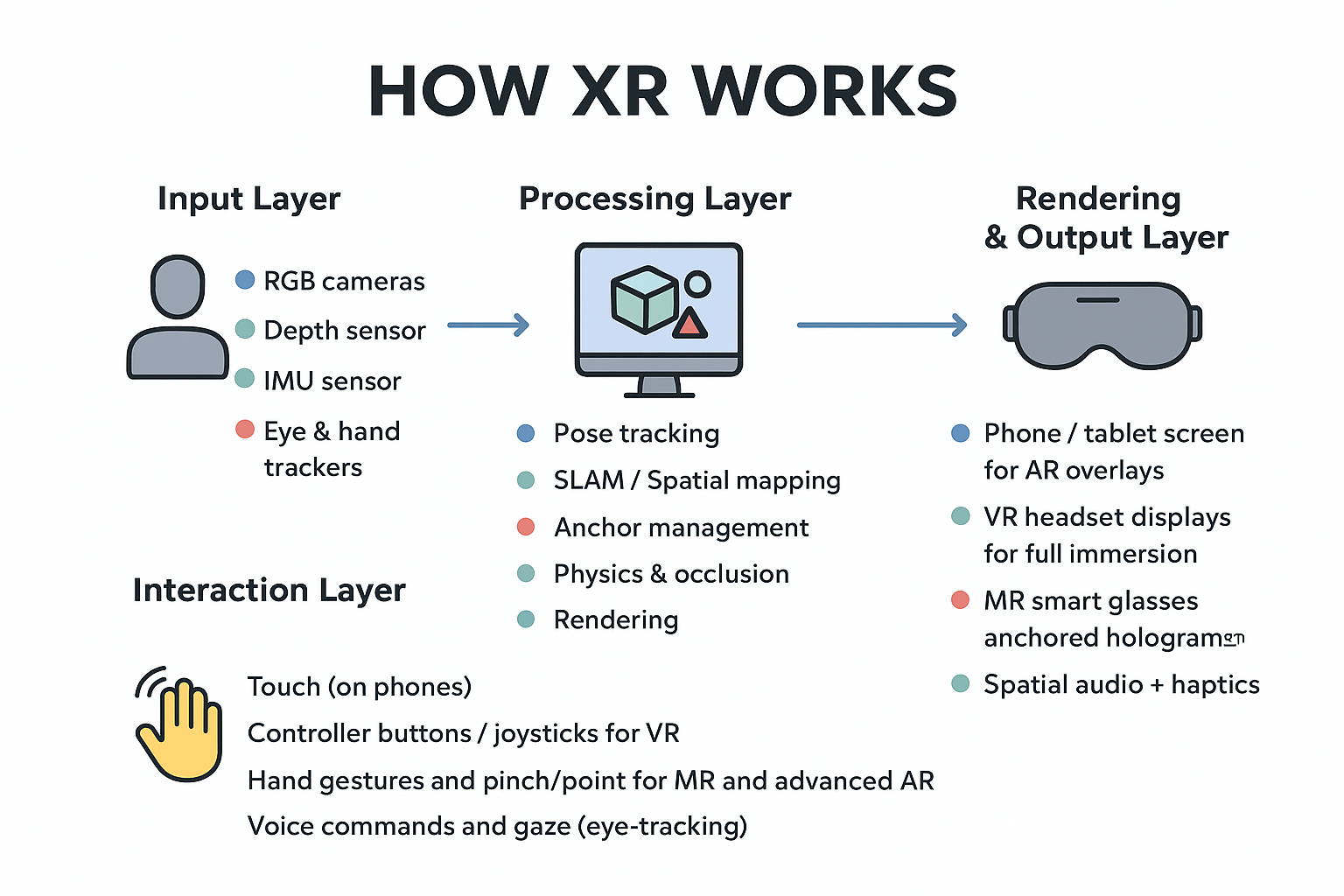

When people say XR System Components, they mean the hardware and software parts that make extended reality happen. Think of it as a recipe: sensors collect the ingredients, the engine mixes them, and displays + interaction devices serve the final dish to the user.

📷 Sensors — the input eyes and ears

Sensors are the frontline: they observe the real world so the XR system can understand where things are and how they're moving. Common sensors include:

- RGB cameras — color images and scene understanding

- Depth cameras / LiDAR — distance and geometry information

- IMU (accelerometer + gyroscope) — device orientation and motion

- Microphones — voice input and spatial audio capture

- Eye & hand trackers — fine-grained interaction intent
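
To make the IMU bullet concrete, here is a minimal sketch of a complementary filter, one simple way to fuse gyroscope and accelerometer readings into a single pitch estimate. The function names, the 0.98 blend factor, and the axis convention are assumptions chosen for illustration; real headsets typically use more sophisticated fusion.

```javascript
// Complementary filter: a minimal sketch of IMU sensor fusion (pitch only).
// All names and the alpha value are illustrative assumptions.

// Pitch angle (radians) implied by gravity as seen by the accelerometer.
// Assumed convention: a device at rest and level reads (0, 0, g).
// Sign and axis conventions vary between devices.
function pitchFromAccel(ax, ay, az) {
  return Math.atan2(-ax, Math.sqrt(ay * ay + az * az));
}

// Blend the integrated gyro rate (smooth, but drifts over time) with the
// accelerometer pitch (noisy, but does not drift).
function complementaryFilter(prevPitch, gyroRate, accel, dt, alpha = 0.98) {
  const gyroPitch = prevPitch + gyroRate * dt;
  const accelPitch = pitchFromAccel(accel.x, accel.y, accel.z);
  return alpha * gyroPitch + (1 - alpha) * accelPitch;
}

// Usage: a stationary, level device whose gyro reports a small constant bias.
let pitch = 0;
for (let i = 0; i < 1000; i++) {
  pitch = complementaryFilter(pitch, 0.01, { x: 0, y: 0, z: 9.81 }, 0.01);
}
console.log(pitch.toFixed(4));
```

With these made-up numbers, integrating the biased gyro alone would drift to 0.1 rad after 1000 steps, while the blended estimate settles near 0.005 rad because the accelerometer keeps pulling it back toward level.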

🧠 Processing units — the brain

Raw sensor data is noisy and heavy. Processing units run the algorithms that turn sensor streams into useful state: pose, maps, anchors, and physics. Options include:

- Mobile SoC (phone / tablet)

- High-performance CPU + GPU (PC / tethered headset)

- Dedicated XR chips / NPUs (emerging devices)

⚙️ XR Engine & Middleware — the software glue

Engines and frameworks (Unity, Unreal, WebXR stacks, ARKit/ARCore) orchestrate everything: they fuse sensor input, run SLAM (simultaneous localization and mapping), manage anchors, simulate physics, and schedule rendering. Middleware may add networking, cloud anchors, or optimization layers.

🖥️ Rendering pipeline — how visuals are made

Rendering transforms 3D geometry and materials into pixels shown on a display. Important topics here:

- Stereo rendering (left + right eye)

- Latency minimization (motion-to-photon)

- Occlusion: real objects should block virtual ones correctly

- Lighting & shading for believable blends
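
Stereo rendering boils down to drawing the scene twice from two slightly different viewpoints. The sketch below shows only the geometry: deriving left and right eye positions from a head position and a yaw angle. The helper names and the 0.063 m interpupillary distance are assumptions for illustration. In WebXR the runtime already supplies per-eye views through the viewer pose, so you would not normally compute this yourself.

```javascript
// Stereo rendering sketch: derive left/right eye positions from a head pose.
// The default ipd (interpupillary distance) of 0.063 m is an assumed typical value.

// Rotate a vector around the vertical (y) axis by `yaw` radians
// (right-handed coordinates, y up).
function rotateY(v, yaw) {
  const c = Math.cos(yaw);
  const s = Math.sin(yaw);
  return { x: c * v.x + s * v.z, y: v.y, z: -s * v.x + c * v.z };
}

// Offset each eye by half the IPD along the head's local "right" axis.
function eyePositions(head, yaw, ipd = 0.063) {
  const half = ipd / 2;
  const right = rotateY({ x: 1, y: 0, z: 0 }, yaw);
  return {
    left: { x: head.x - right.x * half, y: head.y, z: head.z - right.z * half },
    right: { x: head.x + right.x * half, y: head.y, z: head.z + right.z * half },
  };
}

// Usage: a head 1.6 m above the floor, looking straight ahead.
const eyes = eyePositions({ x: 0, y: 1.6, z: 0 }, 0);
console.log(eyes.left.x, eyes.right.x); // -0.0315 0.0315
```

Each eye position then feeds its own view matrix, and the two rendered images go to the left and right halves of the display.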

🕶️ Displays & output devices — where the user looks

Output hardware determines the user's view and comfort:

- Phone/tablet screens (common for AR)

- VR headsets (fully immersive)

- MR smart glasses (transparent displays with anchored holograms)

- Spatial audio systems & haptic devices (sound + touch feedback)

🤝 Interaction devices — how users act

Interaction devices turn the user's actions into control signals for the XR app:

- Controllers (buttons, joysticks)

- Hand tracking (pinch, grab, gesture)

- Eye gaze and voice commands

- Foot or body tracking for full-body experiences
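
As a small illustration of the hand-tracking bullet, a pinch can be detected by checking how close the thumb tip is to the index fingertip. This is a sketch under stated assumptions: joint positions are plain `{x, y, z}` objects in meters, and the 2 cm start and 3 cm release thresholds are made-up values. In WebXR, similar positions would come from the hand input joints (for example `thumb-tip` and `index-finger-tip`).

```javascript
// Pinch detection sketch: thumb tip and index tip closer than a threshold.
// The thresholds are assumed values; the gap between them (hysteresis)
// keeps the pinch from flickering when the fingers hover near the limit.
function distance(a, b) {
  return Math.hypot(a.x - b.x, a.y - b.y, a.z - b.z);
}

function createPinchDetector(startDist = 0.02, endDist = 0.03) {
  let pinching = false;
  return function update(thumbTip, indexTip) {
    const d = distance(thumbTip, indexTip);
    if (!pinching && d < startDist) {
      pinching = true; // fingers came together
    } else if (pinching && d > endDist) {
      pinching = false; // fingers clearly separated again
    }
    return pinching;
  };
}

// Usage: feed the detector one sample per frame.
const isPinching = createPinchDetector();
const thumb = { x: 0, y: 0, z: 0 };
const states = [0.05, 0.015, 0.025, 0.04].map(
  (gap) => isPinching(thumb, { x: gap, y: 0, z: 0 })
);
console.log(states); // [ false, true, true, false ]
```

The third sample stays `true` even though 2.5 cm is above the start threshold, which is the hysteresis doing its job.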

🔁 Data flow — the real-time loop (concept)

Below is compact pseudocode showing the continuous loop XR systems run to stay in sync with the world.

    // XR main loop (conceptual)
    initSensors()
    initXRSession()

    while (sessionActive) {
        frames = captureSensorFrames()        // camera, depth, IMU
        pose = estimatePose(frames)           // head & device position
        sceneMap = updateSpatialMap(frames)   // SLAM / environment mesh
        updateAnchors(sceneMap)               // persistent object positions
        input = readInteractionDevices()      // hands, controllers, voice
        applyPhysics(input, sceneMap)         // collisions, forces
        frameLeft, frameRight = renderStereo(pose, sceneMap)
        presentToDisplay(frameLeft, frameRight)
    }

    shutdownXRSession()

🌐 WebXR snippet — start an AR session (concept)

This is a short example that requests an immersive-ar session in a browser. Real deployments require HTTPS, feature checks, and correct rendering setup.

    // Call this from a user gesture (e.g. a button click): browsers require
    // user activation before starting an immersive session.
    async function startAR() {
        if (!navigator.xr) {
            console.log('WebXR API not available');
            return null;
        }
        const supported = await navigator.xr.isSessionSupported('immersive-ar');
        if (!supported) {
            console.log('AR not supported on this device');
            return null;
        }
        const session = await navigator.xr.requestSession('immersive-ar', {
            requiredFeatures: ['hit-test'],
        });
        // connect WebGL layer, run frame loop, perform hit-tests to place anchors
        return session;
    }
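
The "feature checks" mentioned above can be sketched as a small helper that picks the best session mode a device supports and falls back gracefully. The function name `pickSessionMode` and the idea of passing the `navigator.xr`-like object in as a parameter are assumptions of this sketch; the parameter just makes it easy to try outside a browser with a stand-in object.

```javascript
// Feature-check sketch: choose the best session mode the device supports.
// `xr` is expected to behave like navigator.xr (it must provide
// isSessionSupported). The helper name and parameter are illustrative.
async function pickSessionMode(xr, preferred = ['immersive-ar', 'immersive-vr']) {
  if (!xr) return null; // WebXR API not available at all
  for (const mode of preferred) {
    try {
      if (await xr.isSessionSupported(mode)) return mode;
    } catch (err) {
      // The check itself can be rejected (for example when blocked by a
      // permissions policy), so move on to the next candidate.
    }
  }
  return 'inline'; // non-immersive fallback
}

// Usage with a stand-in object that only supports VR.
const fakeXR = { isSessionSupported: async (mode) => mode === 'immersive-vr' };
pickSessionMode(fakeXR).then((mode) => console.log(mode)); // immersive-vr
```

In a real page you would call `pickSessionMode(navigator.xr)` and then request the returned mode from a button click handler.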

🧪 Small A-Frame demo — quick VR test

Use this tiny A-Frame scene to play with a minimal VR environment in your browser.

    <!doctype html>
    <html>
      <head>
        <meta charset="utf-8">
        <script src="https://aframe.io/releases/1.4.0/aframe.min.js"></script>
        <title>Tiny A-Frame XR</title>
      </head>
      <body>
        <a-scene>
          <a-box position="-1 0.5 -3" rotation="0 45 0" color="#4CC3D9"></a-box>
          <a-sphere position="0 1.25 -5" radius="1.25" color="#EF2D5E"></a-sphere>
          <a-plane position="0 0 -4" rotation="-90 0 0" width="6" height="6"></a-plane>
          <a-sky color="#ECECEC"></a-sky>
        </a-scene>
      </body>
    </html>

🔍 Practical tips — what actually matters

- Latency — lower is better for comfort; VR guidance commonly cites a motion-to-photon target of roughly 20 ms or less.

- Robust SLAM — stable anchors make MR believable.

- Occlusion & lighting — crucial for natural blends between real and virtual.

- Power & thermals — mobile XR must balance performance against battery life and heat.
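
The latency and power trade-offs above become easier to reason about with a quick frame-budget calculation: a display refreshing at a given rate leaves 1000 / rate milliseconds for everything in one frame. The sketch below is illustrative only; the stage names and timings are made-up example numbers, not measurements.

```javascript
// Frame-budget sketch: do the per-stage timings fit one refresh interval?
// Stage names and timings in the usage example are invented for illustration.
function frameBudgetMs(refreshHz) {
  return 1000 / refreshHz;
}

function checkBudget(stageTimesMs, refreshHz) {
  const budget = frameBudgetMs(refreshHz);
  const total = Object.values(stageTimesMs).reduce((sum, t) => sum + t, 0);
  return { budget, total, headroom: budget - total, fits: total <= budget };
}

// Usage: tracking, physics and rendering on a 90 Hz headset.
const result = checkBudget({ tracking: 2, physics: 1.5, render: 6 }, 90);
console.log(result.budget.toFixed(1), result.total, result.fits); // 11.1 9.5 true
```

The same 9.5 ms of work would no longer fit at 120 Hz, where the budget shrinks to about 8.3 ms, which is why higher refresh rates push more work onto faster (and hotter) hardware.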

🧩 Putting it together — an example use-case

Imagine a remote-support MR app for field technicians: cameras + depth sensors scan a machine, SLAM anchors a virtual instruction overlay, the rendering engine displays step-by-step holograms on the technician’s glasses, and voice + gesture let the technician confirm each step. The system logs actions to a cloud service for auditing and training.

✍️ Wrap-up — quick checklist

- Sensors capture the world; processors convert that into position and maps.

- XR engines fuse data, manage anchors, run physics and render frames.

- Displays and interaction devices shape the user experience and comfort.

- Performance trade-offs (latency, power, accuracy) drive real design choices.
