XR Interaction Models

🧠 Introduction to XR Interaction Models

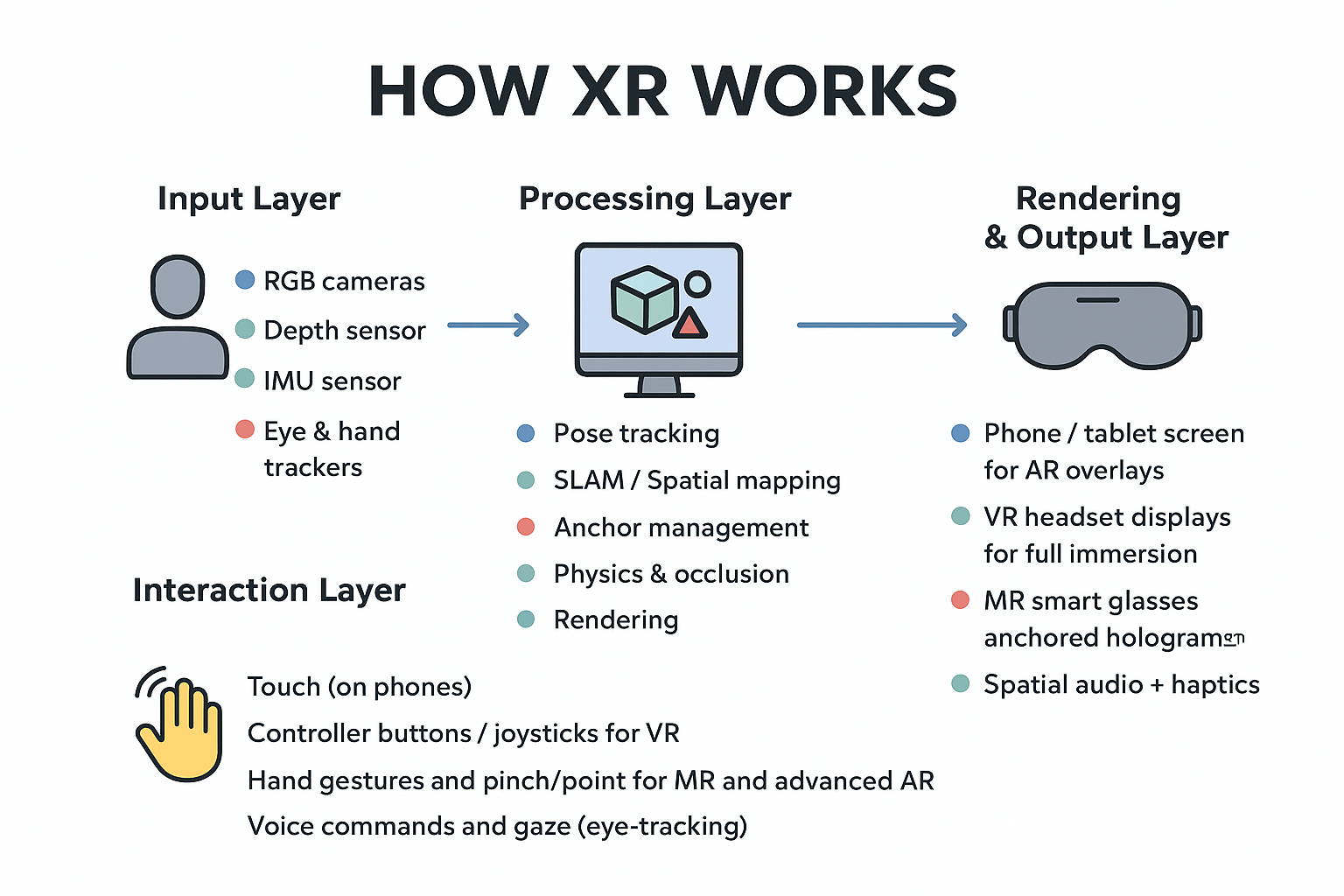

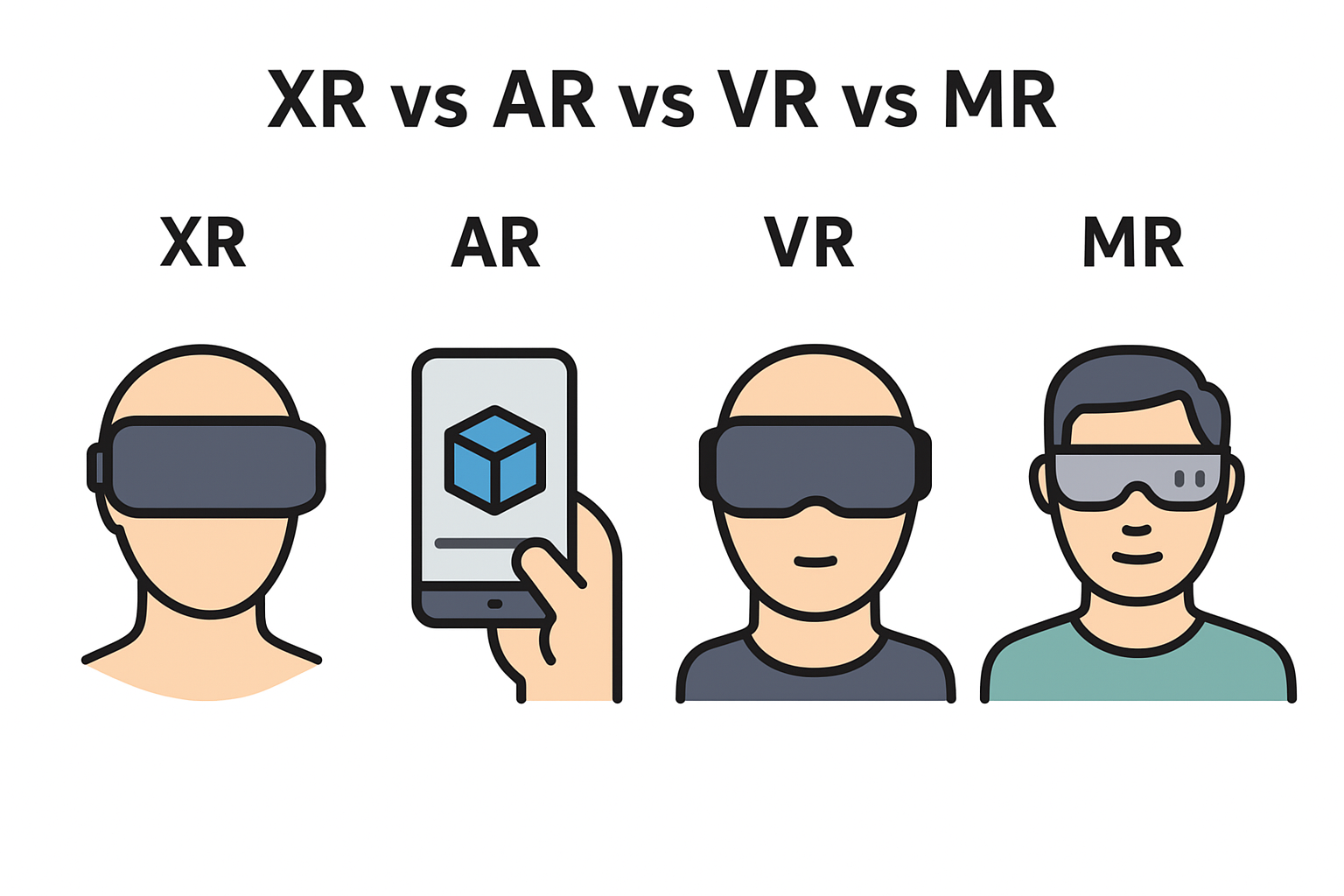

XR Interaction Models define how users naturally communicate with virtual, augmented, and mixed reality environments. Instead of relying only on buttons or screens, modern XR experiences use gestures, voice, body movement, eye tracking, and spatial awareness to make interactions feel human and intuitive. A good interaction model can transform a simple virtual scene into a believable world.

🖐️ Gesture & Hand-Tracking Based Interaction

Hand tracking allows users to touch, grab, rotate, push, or swipe objects in a virtual world just as they would in real life. This model is widely used in VR and MR because it removes the need for physical controllers and deepens immersion.

// Maps a recognized gesture name to the action it triggers.
function detectHandGesture(gesture) {
  if (gesture === "grab") return "Object picked up";
  if (gesture === "pinch") return "Selection activated";
  return "Unknown gesture";
}
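The string-based `detectHandGesture` function assumes that some other layer has already recognized the gesture. One common way to recognize a pinch is to measure the distance between the thumb tip and the index fingertip. The sketch below assumes joint positions in meters given as plain `{x, y, z}` objects (in WebXR these could come from the `thumb-tip` and `index-finger-tip` hand joints); the `classifyGesture` helper and the 2 cm threshold are illustrative assumptions, not a standard.

```javascript
// Hypothetical pinch classifier: a hand counts as pinching when the thumb tip
// and index fingertip are closer than a threshold (positions in meters).
const PINCH_THRESHOLD_M = 0.02; // assumed value; tune per device and user

// Euclidean distance between two 3D points.
function distance(a, b) {
  return Math.hypot(a.x - b.x, a.y - b.y, a.z - b.z);
}

// Returns "pinch" or "none"; only one gesture is recognized in this sketch.
function classifyGesture(thumbTip, indexTip) {
  if (distance(thumbTip, indexTip) < PINCH_THRESHOLD_M) return "pinch";
  return "none";
}

// Fingertips 1 cm apart count as a pinch; 5 cm apart do not.
console.log(classifyGesture({ x: 0, y: 0, z: 0 }, { x: 0.01, y: 0, z: 0 })); // pinch
console.log(classifyGesture({ x: 0, y: 0, z: 0 }, { x: 0.05, y: 0, z: 0 })); // none
```

Returning the string `"pinch"` keeps the output compatible with `detectHandGesture`. A single fixed threshold is the simplest choice; because tracked joint positions jitter, apps may also add smoothing or separate press and release thresholds.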

🎤 Voice-Driven Interaction

Voice commands make XR more accessible and hands-free. Users can open menus, control tools, switch scenes, or trigger actions simply by speaking. This model is especially useful in enterprise workflows, training modules, and productivity apps.

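// voiceInput: transcript string from a speech recognizer; startXRTraining(): app-defined handler (both assumed)
if (voiceInput.trim().toLowerCase() === "start simulation") {
  startXRTraining();
}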
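A single `if` statement handles one phrase. When an app supports several commands, a lookup table keeps the dispatch in one place and makes matching tolerant of capitalization and extra spaces. This sketch assumes the transcript string comes from some speech recognizer (for example the Web Speech API, where the browser supports it); the phrases, the `handleVoiceInput` name, and the returned strings are hypothetical placeholders.

```javascript
// Hypothetical command table: maps normalized phrases to actions.
// The action bodies are placeholders; a real app would call into its own scene code.
const voiceCommands = new Map([
  ["start simulation", () => "simulation started"],
  ["open menu", () => "menu opened"],
  ["next scene", () => "scene switched"],
]);

// Lowercase, trim, and collapse repeated whitespace so that
// "  Start   Simulation " matches "start simulation".
function normalizeTranscript(text) {
  return text.trim().toLowerCase().replace(/\s+/g, " ");
}

// Looks up the normalized transcript and runs the matching action, if any.
function handleVoiceInput(transcript) {
  const action = voiceCommands.get(normalizeTranscript(transcript));
  return action ? action() : "command not recognized";
}

console.log(handleVoiceInput("  Start   Simulation ")); // simulation started
console.log(handleVoiceInput("jump")); // command not recognized
```

Exact-match lookup is deliberately strict; it avoids firing the wrong action on a misheard phrase, at the cost of ignoring close variants.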

🎮 Controller-Based Interaction

Controllers remain one of the most reliable XR interaction methods. They offer tactile feedback, precise pointing, and fast input, which makes them ideal for gaming, complex tasks, and high-speed simulations. Many users still prefer controllers for their accuracy.

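// Assumes inputSource is an XRInputSource from an active WebXR session.
// With the "xr-standard" gamepad mapping, buttons[0] is the primary trigger.
const trigger = inputSource.gamepad?.buttons[0];
console.log("Trigger state:", trigger ? trigger.value : "no gamepad");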
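Controller triggers are often analog, reporting a value from 0 to 1 (in the WebXR Gamepads Module this is `GamepadButton.value`), so apps usually turn that value into discrete press and release events. Using two thresholds (hysteresis) keeps the state from flickering when a finger rests near a single cutoff. The `createTriggerTracker` name and the 0.6/0.4 thresholds are assumptions chosen for illustration.

```javascript
// Converts a stream of analog trigger values (0..1) into discrete press/release
// events using two thresholds, so small jitter near one cutoff does not toggle the state.
function createTriggerTracker(pressAt = 0.6, releaseAt = 0.4) {
  let pressed = false;
  return function update(value) {
    if (!pressed && value >= pressAt) {
      pressed = true;
      return "press";
    }
    if (pressed && value <= releaseAt) {
      pressed = false;
      return "release";
    }
    return null; // no state change this frame
  };
}

// Feed one value per frame; values between 0.4 and 0.6 never change the state.
const update = createTriggerTracker();
const events = [0.1, 0.55, 0.65, 0.5, 0.45, 0.3].map((v) => update(v));
console.log(events); // → [null, null, "press", null, null, "release"]
```

The gap between the two thresholds is the design choice: a wider gap suppresses more jitter but makes the trigger feel less responsive.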

👀 Gaze & Eye-Tracking Interaction

Eye tracking introduces a subtle but powerful interaction technique: users simply look at an object to highlight or select it. Combined with small gestures or voice commands, gaze-based interaction reduces effort and speeds up workflows.

📍 Spatial & Environmental Interaction

Spatial interaction relies on the user’s real-world environment. Devices track surfaces, distances, objects, and room geometry, which lets users place virtual items on tables, move around naturally, hide behind real objects, or interact dynamically with mixed spaces.

⚡ Multimodal Interaction Models

The most advanced XR systems combine multiple inputs: hand tracking, voice, gaze, body movement, and environmental mapping. This fusion lets apps respond in the most natural way for whatever the user is doing at the moment.

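// Gaze picks the target and a pinch confirms it.
// gazeTarget, gesture, and activateButton() are assumed to come from the app's input layer.
if (gazeTarget === "button" && gesture === "pinch") {
  activateButton();
}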
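Checking gaze and gesture in the same frame can miss a pinch that arrives just after the eyes have moved on. One way to make the fusion more forgiving is to remember the last gaze target for a short grace period. This is an illustrative sketch: the selector object, its method names, and the 150 ms window are assumptions, not part of any particular XR SDK.

```javascript
// Hypothetical gaze-and-pinch selector: gaze chooses the target, a pinch confirms it.
// The last gaze target stays valid for a short grace period, since the eyes
// may already be moving on when the pinch arrives. 150 ms is an assumed value.
function createGazePinchSelector(graceMs = 150) {
  let lastTarget = null;
  let lastSeenAt = -Infinity;

  return {
    // Call every frame with the object under the gaze ray (or null) and a timestamp in ms.
    onGaze(target, timeMs) {
      if (target !== null) {
        lastTarget = target;
        lastSeenAt = timeMs;
      }
    },
    // Call when the hand-tracking layer reports a pinch; returns the selected target or null.
    onPinch(timeMs) {
      return timeMs - lastSeenAt <= graceMs ? lastTarget : null;
    },
  };
}

const selector = createGazePinchSelector();
selector.onGaze("button", 1000);
selector.onGaze(null, 1050); // gaze drifts off the button
console.log(selector.onPinch(1100)); // button (within 150 ms of the last hit)
console.log(selector.onPinch(1300)); // null (grace period expired)
```

A longer grace period tolerates more eye movement but raises the chance of selecting something the user has already looked away from.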

🔗 Object-Based & Direct Manipulation Models

Direct manipulation allows users to interact with objects exactly as they would in reality: lifting a box, turning a knob, sliding a lever, or opening a door. This interaction style is the closest to natural human behavior and greatly enhances immersion.

🚀 Final Thoughts on XR Interaction Models

XR Interaction Models continue to evolve as hardware becomes more intelligent and intuitive. The future of XR lies in frictionless interaction, where people can interact with digital worlds just by moving, speaking, looking, or thinking. A well-designed interaction model is the backbone of every engaging XR experience.